Cancer is one of the leading causes of death worldwide, with millions of new cases diagnosed every year. The key to improving survival rates is early detection, as cancers caught in their initial stages are significantly more treatable. Traditional diagnostic methods, such as biopsies, CT scans, MRIs, and mammograms, have limitations in accuracy, speed, and accessibility.

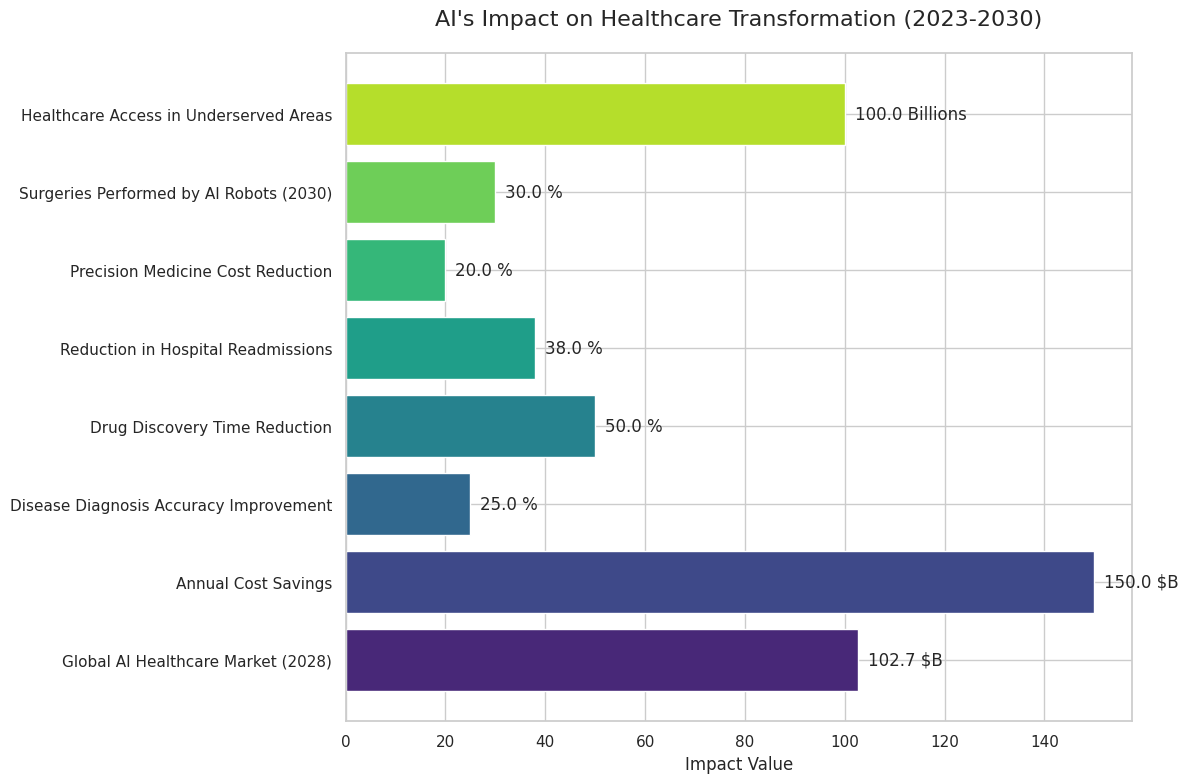

This is where Artificial Intelligence (AI), Machine Learning (ML), and Deep Learning (DL) are making a significant impact. AI-driven cancer detection systems are improving accuracy, reducing diagnostic time, and making cancer screening more accessible to populations worldwide. This blog explores how AI is transforming early cancer detection, covering the history of cancer detection, current advancements, and future potential.

A Brief History of Cancer Detection

Before modern medical imaging, cancer detection relied heavily on physical symptoms and biopsy procedures. By the late 19th and early 20th centuries, X-rays and microscopy had become essential tools for identifying abnormal growths. However, misdiagnosis rates remained high because the images had to be interpreted entirely by eye.
